Key Takeaways

- High-availability (HA) is shifting from reactive disaster recovery to proactive, self-healing autonomous systems.

- Observability will move beyond metrics toward predictive AI-driven anomaly detection to prevent downtime before it occurs.

- Adopting chaos engineering as a standard CI/CD practice is non-negotiable for teams targeting 99.999% uptime.

- Serverless and edge computing will redefine latency and fault tolerance benchmarks.

- Technical debt remains the primary silent killer of HA systems; managing it early is vital for long-term scalability.

The landscape of software engineering is undergoing a tectonic shift as we move deeper into the cloud-native era. For high-growth startups and enterprises alike, downtime is no longer just an inconvenience; it is a direct threat to brand equity and revenue. Our predictions for the next five years center on a radical move toward automated resilience.

What will define the next generation of cloud-native resilience?

Resilience in 2025 and beyond will be defined by the transition from human-operated recovery to autonomous orchestration. We are seeing a move away from static infrastructure toward systems that anticipate failure. Engineers are no longer just building for the "happy path"; they are building systems that actively expect chaos.

Consider these core shifts in architecture:

- Autonomous Self-Healing: Kubernetes operators and AI-driven control planes will autonomously roll back deployments or shift traffic without manual intervention.

- Predictive Auto-scaling: Moving from reactive CPU-based scaling to AI-driven models that predict traffic spikes based on historical patterns.

- Edge Failover: Distributing state across global edge nodes to ensure that a regional cloud outage does not take down the entire application.
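
To make the predictive auto-scaling idea concrete, here is a minimal sketch in Python. It is not a production autoscaler: a simple linear extrapolation stands in for the AI-driven model, and the function names, the `headroom` factor, and the replica limits are all hypothetical choices made for illustration.

```python
import math
from typing import Sequence


def forecast_next(history: Sequence[float], window: int = 3) -> float:
    """Extrapolate the next sample from the average step across the recent window."""
    if not history:
        raise ValueError("history must contain at least one sample")
    recent = list(history[-window:])
    # Average change per interval; zero when only one sample is available.
    step = (recent[-1] - recent[0]) / (len(recent) - 1) if len(recent) > 1 else 0.0
    return max(0.0, recent[-1] + step)


def desired_replicas(
    history: Sequence[float],
    capacity_per_replica: float,
    headroom: float = 1.2,
    min_replicas: int = 1,
    max_replicas: int = 20,
) -> int:
    """Size the deployment for the forecast load rather than the current load."""
    predicted = forecast_next(history)
    needed = math.ceil(predicted * headroom / capacity_per_replica)
    # Clamp to the allowed range so a bad forecast cannot scale to zero or to infinity.
    return max(min_replicas, min(max_replicas, needed))
```

With request rates of 100, 150, and 200 per second, the forecast is 250. A reactive scaler would size for the current 200, while this sketch sizes for the predicted 250 plus headroom. A real system would swap `forecast_next` for a model trained on historical traffic patterns.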

Resilience is not a feature; it is an architectural baseline that must be baked into every microservice interaction.

To avoid the pitfalls of early-stage growth, companies must be diligent about how they structure their backend services. Addressing these issues early is critical, as discussed in our guide on Scaling Architecture: 5 Patterns to Prevent Technical Debt. Without proper modularization, high availability becomes an impossible goal.

How will observability evolve to meet modern uptime demands?

In a cloud-native world, traditional logs and basic metrics are insufficient. Our predictions suggest a transition toward "AIOps", where machine learning models digest vast streams of telemetry to flag emerging issues and their likely root cause before they affect end users.

The next-gen observability stack will include:

- Distributed Tracing by Default: Every request will be traceable through its entire lifecycle, across internal and external microservices.

- Contextual Anomaly Detection: Tools that distinguish between benign latency and actual system degradation based on real-time traffic analysis.

- Automated Root-Cause Remediation: Systems that trigger automated runbooks as soon as an incident is detected, with the goal of sharply reducing MTTR (mean time to recovery).
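
As a rough illustration of contextual anomaly detection, the sketch below flags a latency sample only when it deviates strongly from the recent baseline. A rolling z-score is used here as a simple stand-in for a learned model. The class name, window size, and threshold are assumptions made for this example, not settings from any particular tool.

```python
from collections import deque
from statistics import mean, stdev


class LatencyAnomalyDetector:
    """Flags latency samples that sit far outside the recent rolling baseline."""

    def __init__(self, window: int = 30, threshold: float = 3.0, min_samples: int = 5):
        self.samples = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, latency_ms: float) -> bool:
        """Return True if the sample is anomalous relative to the current window."""
        is_anomaly = False
        if len(self.samples) >= self.min_samples:
            mu = mean(self.samples)
            sigma = stdev(self.samples)
            if sigma > 0:
                is_anomaly = abs(latency_ms - mu) / sigma > self.threshold
            else:
                # A perfectly flat baseline: any different value is a deviation.
                is_anomaly = latency_ms != mu
        # Keep anomalies out of the baseline so one spike does not mask the next.
        if not is_anomaly:
            self.samples.append(latency_ms)
        return is_anomaly
```

Ordinary jitter around 100 ms passes quietly, while a jump to 500 ms is flagged. One trade-off of excluding anomalies from the baseline is that a permanent shift in latency keeps alerting until the window is reset, which may or may not be what a team wants.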

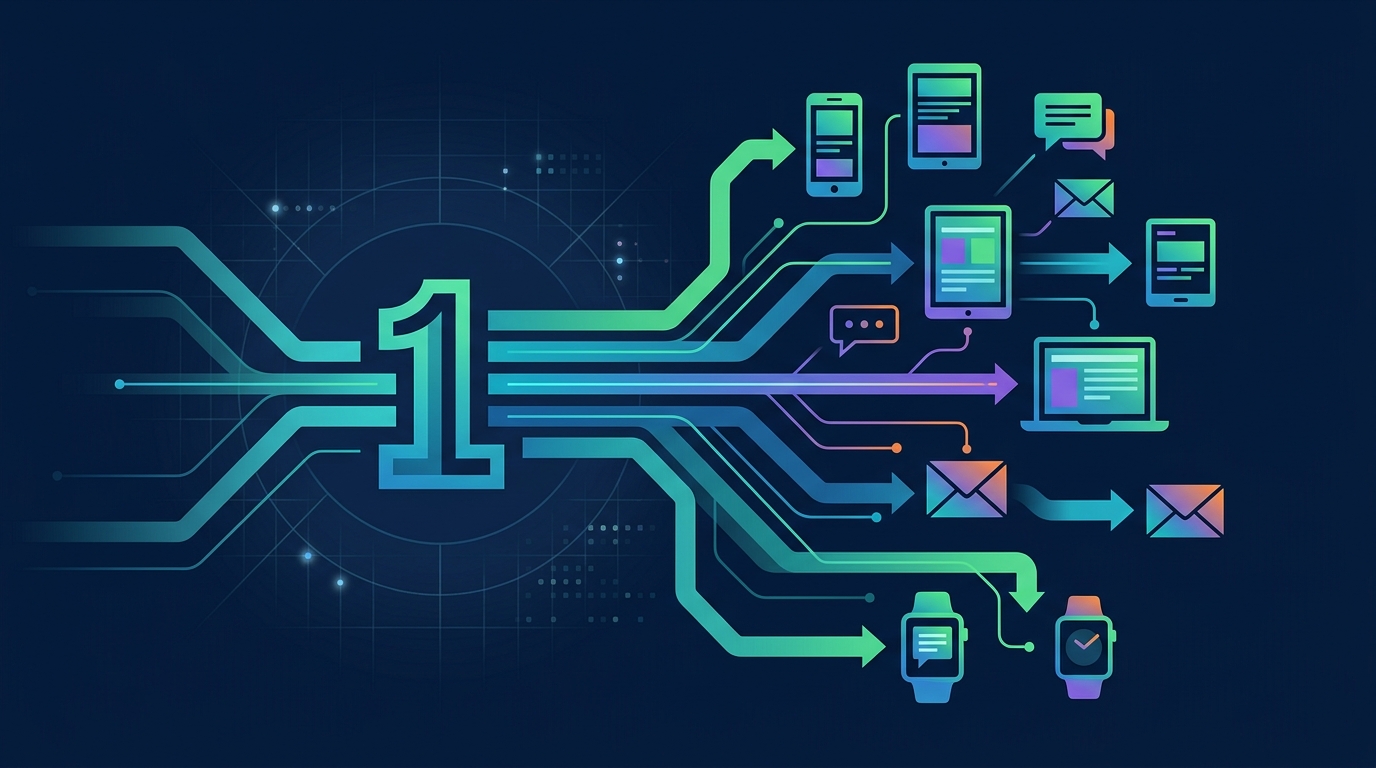

This evolution is particularly crucial for companies managing complex, multi-layered infrastructures. As noted in 2025 Messaging Trends: Why Unified APIs are the New Standard, modern architectures rely heavily on external integrations that must be monitored with the same rigor as internal services.

Why is chaos engineering becoming the standard for high-availability?

Gone are the days when testing was limited to unit tests and integration suites. High-availability systems now require chaos engineering, a methodology championed by pioneers at Netflix to proactively test system robustness by intentionally breaking components in production.

Key pillars of a robust chaos-testing strategy include:

- Network Partition Simulation: Forcing service-to-service communication to fail to test circuit breaker logic.

- Resource Exhaustion Drills: Simulating memory leaks or CPU spikes to ensure auto-scaling triggers properly.

- Dependency Failure Testing: Breaking third-party APIs to ensure that primary application flow remains functional or degrades gracefully.
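
The circuit breaker logic that these drills exercise can be sketched in a few lines of Python. This is an illustrative minimal version rather than a specific library's implementation: the `CircuitBreaker` class, its thresholds, and the injectable `clock` parameter are hypothetical names chosen for the example.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stops calling a failing dependency and serves a fallback instead."""

    def __init__(
        self,
        failure_threshold: int = 3,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, func: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.opened_at is not None:
            # Open: skip the dependency entirely until the timeout elapses.
            if self.clock() - self.opened_at < self.reset_timeout:
                return fallback()
            # Half-open: let a single trial call through.
            self.opened_at = None
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        return result
```

A dependency failure drill then amounts to passing in a function that always raises and asserting two things: callers still receive the fallback, and the broken dependency stops being called once the threshold is reached.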

Without these active tests, you are essentially flying blind. Engineering teams must adopt a mindset where the system is constantly tested against its own boundaries.

How can engineering teams prepare for the shift toward multi-regional availability?

The demand for true 99.999% uptime (five-nines) is forcing a shift toward multi-regional, active-active architectures. This is an expensive but necessary transition for platforms scaling globally. Our predictions indicate that multi-cloud strategies will become the gold standard to mitigate provider-specific outages.
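
It helps to see how small a five-nines budget is. The short sketch below does the arithmetic; the function names are made up for this example, and the multi-region formula assumes regions fail independently, which is an optimistic simplification because real regions can share failure modes.

```python
def downtime_budget_minutes(availability_percent: float, days: int = 365) -> float:
    """Minutes of allowed downtime over the period for a given availability target."""
    return (1 - availability_percent / 100) * days * 24 * 60


def combined_availability(per_region: float, regions: int) -> float:
    """Availability of an active-active setup, assuming independent region failures.

    per_region is a fraction (0.999 for 99.9%). The service is down only
    when every region is down at the same time.
    """
    return 1 - (1 - per_region) ** regions
```

A 99.999% target leaves roughly 5.26 minutes of downtime per year, compared with about 8.76 hours at 99.9%. Under the independence assumption, two active-active regions at 99.9% each reach about 99.9999% on paper, which is why multi-region designs are the usual route to five nines.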

Strategies for achieving global HA include:

- Database Replication: Utilizing globally distributed databases like CockroachDB or Amazon Aurora Global Database to replicate data across regions and keep reads close to users.

- Traffic Routing: Using intelligent DNS and CDN services to route users to the healthiest node in real time.

- Data Sovereignty Compliance: Integrating regional compliance checks into the architectural design to manage user data legally across global nodes.
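
The traffic routing strategy above can be reduced to a small decision function. The sketch below is a simplified illustration, not how any particular DNS or CDN provider works: the `RegionStatus` type, its fields, and the region names in the usage note are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class RegionStatus:
    name: str
    healthy: bool       # result of the latest health check
    latency_ms: float   # measured latency from the user's vantage point


def pick_region(regions: Iterable[RegionStatus]) -> str:
    """Route to the lowest-latency region among those passing health checks."""
    candidates = [r for r in regions if r.healthy]
    if not candidates:
        raise RuntimeError("no healthy region available")
    return min(candidates, key=lambda r: r.latency_ms).name
```

If the closest region, say `eu-west`, fails its health check, the function returns the next-fastest healthy region instead of sending users to a degraded one. In practice the health and latency inputs would come from continuous probes rather than static values.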

The reality is that these systems are complex to design and even harder to maintain. If your roadmap is stalled, it is often because your team is wrestling with the foundational constraints of your current infrastructure rather than building new features.

Stop stalling your product roadmap with technical bottlenecks and let Renbo Studios accelerate your development with high-availability systems and expert-level integration. We specialize in building the resilient, cloud-native backbones that modern businesses require to survive and thrive.

Visit renbostudios.com today to scale your platform faster with our dedicated engineering lab. Our team is ready to help you turn your technical debt into an architectural advantage, ensuring your system is built for the challenges of tomorrow.
