Key Takeaways

- Self-healing autonomous systems will soon replace manual incident response protocols.

- Edge computing will become the primary architecture for reducing latency in global applications.

- Infrastructure configuration will shift from imperative, script-driven setups toward declarative, "intent-based" definitions.

- Security will move from the perimeter to the micro-segmentation of every individual process.

- High availability is no longer a luxury for startups; it is a fundamental requirement for market survival.

The modern digital landscape is relentless. As businesses scale, the pressure to maintain 99.999% uptime moves from a goal to a baseline requirement. We are entering an era where downtime is measured in lost reputation and immediate revenue evaporation.
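To make the "five nines" target concrete, here is a small sketch that converts an availability percentage into a yearly downtime budget. The function name `downtime_budget_minutes` and the 365-day year are illustrative assumptions, not part of any standard tooling.

```python
def downtime_budget_minutes(availability_percent: float, period_days: float = 365.0) -> float:
    """Return the allowed downtime, in minutes, for an availability target."""
    unavailable_fraction = 1.0 - availability_percent / 100.0
    return unavailable_fraction * period_days * 24 * 60


# Compare common availability targets over an assumed 365-day year.
for target in (99.9, 99.99, 99.999):
    print(f"{target}% -> {downtime_budget_minutes(target):.2f} min/year")
```

At 99.999%, the budget works out to roughly 5.26 minutes of downtime per year, which leaves very little room for manual investigation.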

Understanding the future of infrastructure requires looking beyond today's cloud patterns. We have analyzed current trends to provide 5 predictions that will define the next decade of engineering.

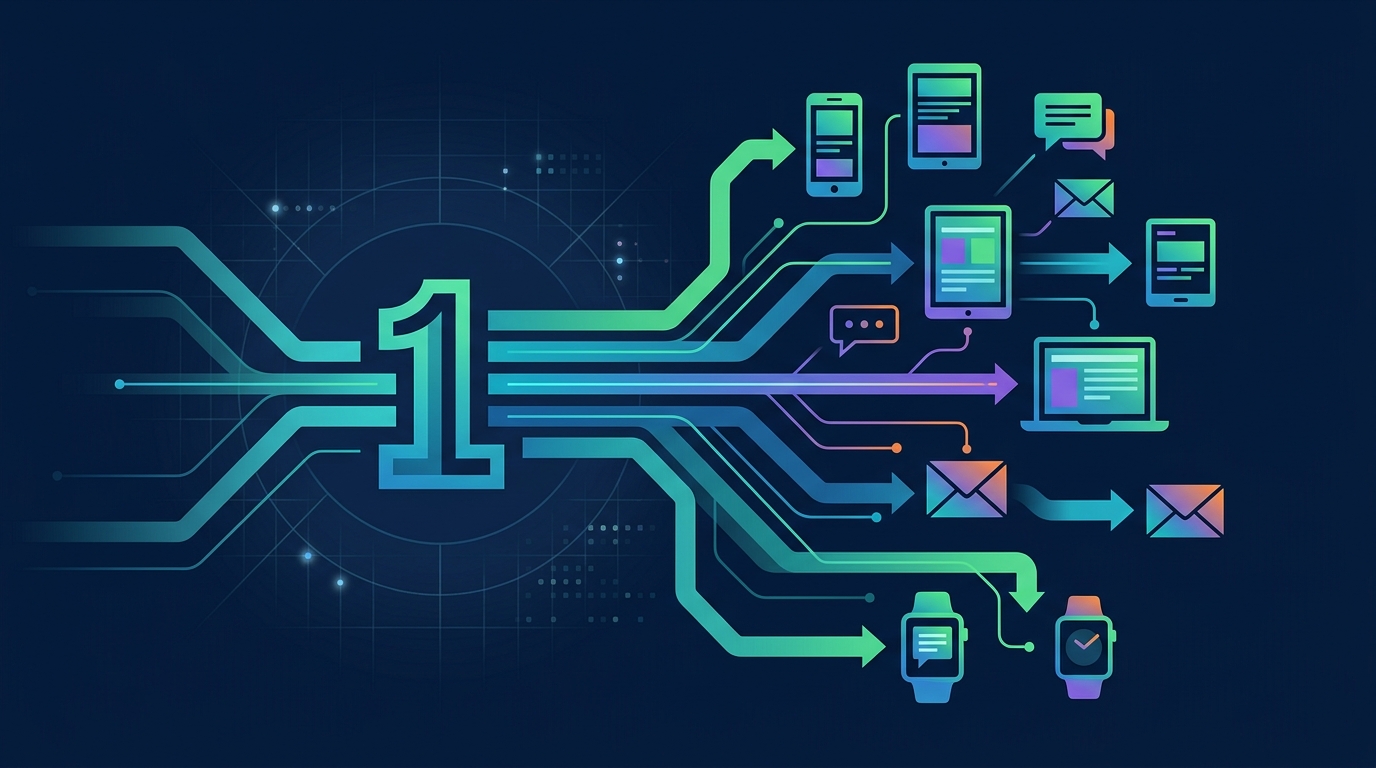

1. How Will AI-Driven Autonomous Remediation Change Operations?

The manual "pager-on-call" model is becoming obsolete. We predict that AI-driven autonomous systems will perform the vast majority of infrastructure remediation without human intervention.

- Anomaly Detection: Systems will identify spikes in memory usage before they lead to OOM (Out of Memory) crashes.

- Automated Rollbacks: Infrastructure will automatically revert to the last stable state upon detecting anomalous performance metrics.

- Predictive Scaling: Resource allocation will happen based on historical traffic patterns rather than reactive threshold triggers.
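The first two ideas above can be sketched in a few lines. This is a deliberately simple illustration that uses a rolling z-score, not a description of how any particular AIOps product works. The class `MemoryAnomalyDetector`, the helper `choose_release`, the window size, the five-sample warm-up, and the threshold of 3.0 are all assumptions chosen for the example.

```python
from collections import deque
from statistics import mean, pstdev


class MemoryAnomalyDetector:
    """Flags a memory sample that sits far above the recent rolling baseline."""

    def __init__(self, window: int = 30, z_threshold: float = 3.0):
        self.samples = deque(maxlen=window)  # recent "normal" samples only
        self.z_threshold = z_threshold

    def observe(self, memory_mb: float) -> bool:
        """Record a sample and return True if it looks anomalous."""
        anomalous = False
        if len(self.samples) >= 5:  # assumed minimum baseline before judging
            baseline = mean(self.samples)
            spread = pstdev(self.samples) or 1.0  # avoid dividing by zero on flat data
            anomalous = (memory_mb - baseline) / spread > self.z_threshold
        if not anomalous:
            self.samples.append(memory_mb)  # keep anomalies out of the baseline
        return anomalous


def choose_release(current: str, last_stable: str, anomalous: bool) -> str:
    """Pick the release to run: revert to the last stable one on an anomaly."""
    return last_stable if anomalous else current
```

A caller would feed each new memory reading into `observe` and pass the result to `choose_release`. In a real system the rollback decision would normally require several consecutive anomalous readings rather than a single one.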

AI-driven infrastructure can reduce Mean Time to Recovery (MTTR) by an estimated 70% compared to traditional manual investigation methods.

As you plan your long-term growth, remember that scaling architecture is about anticipating failure, not just preventing it. By building these feedback loops today, you ensure your platform stays resilient as it grows.
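Predictive scaling is one such feedback loop. The sketch below sizes a service from a historical traffic profile instead of waiting for a threshold alarm. The names `build_hourly_profile` and `replicas_for_hour`, the per-replica capacity of 100 requests per second, and the 20% headroom are hypothetical values for illustration.

```python
import math
from collections import defaultdict


def build_hourly_profile(history):
    """Build {hour_of_day: peak requests/sec} from (hour, rps) observations."""
    buckets = defaultdict(list)
    for hour, rps in history:
        buckets[hour].append(rps)
    # Use the observed peak per hour as a conservative forecast.
    return {hour: max(values) for hour, values in buckets.items()}


def replicas_for_hour(profile, hour, rps_per_replica=100.0, headroom=1.2, min_replicas=2):
    """Return the replica count to provision ahead of the given hour."""
    expected_rps = profile.get(hour, 0.0)  # unknown hours fall back to the minimum
    needed = math.ceil(expected_rps * headroom / rps_per_replica)
    return max(min_replicas, needed)
```

Taking the hourly peak is a crude forecast. A production system would likely account for weekday patterns and trends as well, but the shape of the loop stays the same: learn from history, then provision before the traffic arrives.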

2. Why Is Edge Computing Becoming the New Standard for Availability?

A single centralized cloud region is both a single point of failure and a latency bottleneck. Edge computing moves compute closer to the user, which lowers latency and can keep applications available even during a regional cloud outage.

- Reduced Propagation Delay: Serving requests from nodes near the user shortens round-trip time, and distributing state globally limits the impact of localized ISP failures.

- Offline Resilience: Localized edge nodes allow applications to function in a degraded state when central connectivity is lost.

- Compute Density: Modern edge platforms allow for containerized workloads to execute at the network periphery.
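The offline-resilience point above can be illustrated with a small sketch of an edge node that serves the last known value when the central origin is unreachable. `EdgeNode` and the `fetch_from_origin` callback are hypothetical names for this example and do not correspond to any specific edge platform's API.

```python
import time
from typing import Callable, Dict, Tuple


class EdgeNode:
    """Serves fresh data when the origin is reachable, stale data when it is not."""

    def __init__(self, fetch_from_origin: Callable[[str], str]):
        self._fetch = fetch_from_origin
        self._cache: Dict[str, Tuple[str, float]] = {}  # key -> (value, cached_at)

    def get(self, key: str) -> Tuple[str, bool]:
        """Return (value, degraded); degraded is True when serving cached data."""
        try:
            value = self._fetch(key)
        except ConnectionError:
            if key in self._cache:
                return self._cache[key][0], True  # degraded mode: last known value
            raise  # nothing cached, so the failure must surface
        self._cache[key] = (value, time.time())
        return value, False
```

Returning the `degraded` flag alongside the value lets the application decide how to present possibly stale data, for example by disabling writes until connectivity returns.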

See the official Google Cloud Edge Computing documentation for how hardware-level distributed systems are changing the deployment paradigm. Relying on a single region is a risk that most funded startups can no longer afford to take.

3. How Will "Intent-Based" Infrastructure Replace Manual Configuration?

We are moving away from imperative scripts toward declarative, intent-based infrastructure. Engineers will describe the desired outcome, and the infrastructure will reconcile its state to match that reality.

- Declarative Synchronization: Continuous reconciliation loops detect and correct any drift between the running system and its declared configuration.

- Abstracted Complexity: Infrastructure-as-Code (IaC) will become more human-readable, reducing the potential for human error.

- System Integrity: Automated systems will instantly flag unauthorized changes or "configuration drift" within your environment.
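A reconciliation loop of the kind described above can be sketched as a comparison between desired and actual state. This is a minimal in-memory illustration: the functions `plan_changes` and `reconcile`, and the model of a service as a name mapped to a replica count, are assumptions made for the example. Real tools apply changes through a provider API rather than mutating a dictionary.

```python
from typing import Dict, List, Tuple

Action = Tuple[str, str, int]  # (verb, service name, target replicas)


def plan_changes(desired: Dict[str, int], actual: Dict[str, int]) -> List[Action]:
    """Compute the actions needed to make `actual` match `desired`."""
    actions: List[Action] = []
    for name, replicas in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name, replicas))
        elif actual[name] != replicas:
            actions.append(("scale", name, replicas))  # drift detected
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(("delete", name, 0))  # not declared, so remove it
    return actions


def reconcile(desired: Dict[str, int], actual: Dict[str, int]) -> List[Action]:
    """Apply the planned actions to `actual` in place and return them."""
    actions = plan_changes(desired, actual)
    for verb, name, replicas in actions:
        if verb == "delete":
            actual.pop(name)
        else:
            actual[name] = replicas
    return actions
```

Running `reconcile` repeatedly is safe: once the actual state matches the declared intent, the plan is empty and nothing changes. The non-empty plan doubles as a drift report.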

For startups, managing these configurations effectively is key to avoiding wasted burn. Our team has outlined top cloud cost optimization strategies to ensure that your automated infrastructure doesn't inadvertently inflate your monthly bill.

4. Will Zero-Trust Architecture Become the Default for Availability?

Security is now inseparable from availability. If a breach occurs, the resulting system lockdown can cause as much downtime as a server failure.

- Micro-segmentation: Every service, pod, and function will operate in a strictly isolated environment.

- Identity-Centric Access: Trust is never granted based on network location; it is earned via continuous authentication.

- Lateral Movement Prevention: By limiting service communication, you prevent a single compromised endpoint from taking down your entire infrastructure.
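The three principles above reduce to a default-deny check on every service-to-service call. The sketch below is a simplified illustration: the `ALLOWED_FLOWS` table, the service names, and the `is_allowed` function are hypothetical, and a real deployment would verify identity cryptographically (for example with mutual TLS) rather than trust a boolean flag.

```python
from typing import FrozenSet, Tuple

# Explicit allowlist of (caller identity, destination service) pairs.
# Anything not listed here is denied by default.
ALLOWED_FLOWS: FrozenSet[Tuple[str, str]] = frozenset({
    ("frontend", "api"),
    ("api", "database"),
})


def is_allowed(source_identity: str, destination: str, authenticated: bool) -> bool:
    """Decide whether a call may proceed, ignoring network location entirely."""
    if not authenticated:
        return False  # identity must be proven on every request
    return (source_identity, destination) in ALLOWED_FLOWS
```

With this policy, a compromised `frontend` can still reach `api` but cannot talk to `database` directly, which is the lateral-movement limit the list describes.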

Adopting a zero-trust model is no longer optional for high-growth enterprises. It is the most effective way to protect your uptime against modern, sophisticated cyber threats.

5. How Should Startups Approach These Predictions Today?

The pace of change can be paralyzing. Many engineering teams spend more time debating the future than building for it, leading to a stagnant product roadmap that fails to deliver value to customers.

Stop stalling your product roadmap with technical bottlenecks and let Renbo Studios accelerate your development with high-availability systems and expert-level integration. Our team specializes in bridging the gap between engineering strategy and scalable execution, ensuring your infrastructure is built for the challenges of tomorrow.

Visit renbostudios.com today to scale your platform faster with our dedicated engineering lab. We turn complex infrastructure predictions into stable, production-ready reality for funded startups and enterprises.
